Have you recently come across discussions about AI agents?

As of 2025, AI agents have moved beyond theory into real-world business applications, transforming industries at scale. Major tech players are leading the way: Microsoft’s Copilot for Microsoft 365 has reportedly boosted productivity for routine tasks by 70%, while Google’s Duet AI (since rebranded as Gemini for Google Workspace) has reportedly cut document processing time by 55%.

Organizations that adopt AI agents are seeing clear benefits: streamlined workflows, scalability, reduced training costs, fewer errors, and in many cases, monthly savings of up to $80,000.

The advantages of integrating AI agents into business operations are immense!

However, before jumping in, it’s important to understand the fundamentals: What is an AI agent? How do AI agents function? What are some real-life examples of AI agents?

In this blog, we’ll delve into these questions to provide a comprehensive understanding of AI agents and their impact on modern business operations. Let’s dive in!

AI agents are software programs that use artificial intelligence (AI) to perform tasks, make decisions, and solve problems on behalf of users. They can understand their environment, set goals, and take actions—often with some level of independence (autonomy).

These agents are capable of things like:

You’ll find AI agents in tools like chatbots, self-driving cars, recommendation systems, and smart assistants (e.g., Siri, Google Assistant), as well as in enterprise settings like IT automation or code generation.

Many modern AI agents are powered by large language models (LLMs), which is why they’re often called LLM agents. Unlike standalone LLMs, which rely solely on pre-trained data and have limitations in reasoning and real-time knowledge, AI agents go a step further by using external tools in the background. This allows them to fetch current information, streamline workflows, and break down complex tasks into manageable subtasks with minimal human involvement.

The AI agent framework typically involves 3 key stages:

AI agents function autonomously but rely on human-defined goals and parameters. Their behavior is shaped by 3 primary contributors:

Based on the user’s goals and the tools at its disposal, the intelligent agent creates a plan with tasks and subtasks to achieve the desired outcome.

AI agents make decisions based on the information they perceive from their environment. However, they don’t always have all the data needed to complete every step of a complex task. To overcome this, they rely on external tools—such as APIs, web searches, databases, or even other agents—to retrieve missing information in real time.

Once new data is gathered, the agent updates its internal knowledge and applies reasoning to reassess its plan and adjust as needed. This process of tool-assisted reasoning allows the agent to self-correct and make more informed decisions at every step.

For example: Imagine a user asks an AI agent to help choose the best laptop for video editing under $1,200. The agent may not have up-to-date product specs or pricing information. So it queries an e-commerce API to pull current listings, filters laptops based on performance benchmarks (e.g., GPU, RAM, CPU), and checks reviews. Still unsure which is best for editing software, the agent consults a separate agent trained in media production tools. It then combines this input to recommend a shortlist of options, explaining why each one fits the user’s needs.

This kind of reasoning, powered by real-time tool use, makes AI agents more adaptable and capable than standalone AI models.
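The laptop example above can be sketched in a few lines of Python. This is a minimal illustration, not a real integration: `search_listings` stands in for an e-commerce API, `editing_score` stands in for the specialist agent, and the listings, weights, and thresholds are all made up for the example.

```python
from typing import Dict, List

# Made-up listings standing in for an e-commerce API response (assumption).
FAKE_LISTINGS: List[Dict] = [
    {"name": "Laptop A", "price": 1150, "ram_gb": 32, "gpu_score": 70},
    {"name": "Laptop B", "price": 1400, "ram_gb": 32, "gpu_score": 90},
    {"name": "Laptop C", "price": 999, "ram_gb": 8, "gpu_score": 40},
    {"name": "Laptop D", "price": 1190, "ram_gb": 16, "gpu_score": 60},
]


def search_listings(max_price: int) -> List[Dict]:
    """Hypothetical tool: return listings priced at or under max_price."""
    return [item for item in FAKE_LISTINGS if item["price"] <= max_price]


def editing_score(item: Dict) -> float:
    """Hypothetical specialist agent: rate suitability for video editing."""
    return 0.6 * item["gpu_score"] + 0.4 * item["ram_gb"]


def recommend(max_price: int, min_ram_gb: int = 16, top_n: int = 2) -> List[str]:
    # Step 1: the agent lacks current prices, so it calls a tool.
    candidates = search_listings(max_price)
    # Step 2: it filters the results on a spec threshold.
    candidates = [c for c in candidates if c["ram_gb"] >= min_ram_gb]
    # Step 3: it consults the specialist and ranks what is left.
    ranked = sorted(candidates, key=editing_score, reverse=True)
    return [c["name"] for c in ranked[:top_n]]


shortlist = recommend(max_price=1200)
```

With the sample data, `shortlist` contains Laptop A and Laptop D: Laptop B is over budget and Laptop C fails the RAM threshold.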

AI agents enhance their performance over time by continuously learning from various feedback sources, including user interactions, other AI agents, and internal evaluations. This helps them deliver better results, match user preferences, and avoid past errors.

Consider the previous example of helping a user select the best laptop for video editing. The agent stores details about which specs were prioritized (e.g., GPU performance, RAM size), which tools it used, and how the user reacted to its recommendations. If the user gives feedback like “I prefer Macs” or selects a different option than suggested, the agent will record that information for future tasks.

If multiple agents collaborated, such as one specializing in pricing trends and another in editing software compatibility, their feedback helps the main agent make better decisions in the future, even without human input.

This process of learning and improving, known as iterative refinement, allows the agent to build a stronger knowledge base and deliver more accurate, context-aware responses over time.
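One simple way to picture this feedback loop is a small preference memory that is updated after each recommendation. The sketch below is an assumption-laden toy: the `PreferenceMemory` class and its vote-counting rule are hypothetical and only illustrate the idea of recording what the user actually chose.

```python
from collections import Counter
from typing import List


class PreferenceMemory:
    """Hypothetical store of what the user picked versus what was suggested."""

    def __init__(self) -> None:
        self.votes: Counter = Counter()

    def record_choice(self, suggested: str, chosen: str) -> None:
        # Reward the option the user actually picked.
        self.votes[chosen] += 1
        # Penalize the suggestion if the user rejected it.
        if suggested != chosen:
            self.votes[suggested] -= 1

    def rerank(self, options: List[str]) -> List[str]:
        # Higher vote counts come first; ties keep their original order.
        return sorted(options, key=lambda option: -self.votes[option])


memory = PreferenceMemory()
memory.record_choice(suggested="Windows", chosen="Mac")
next_ranking = memory.rerank(["Windows", "Linux", "Mac"])
```

After a single piece of feedback, the next ranking puts "Mac" first and "Windows" last, which mirrors the "I prefer Macs" case described above.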


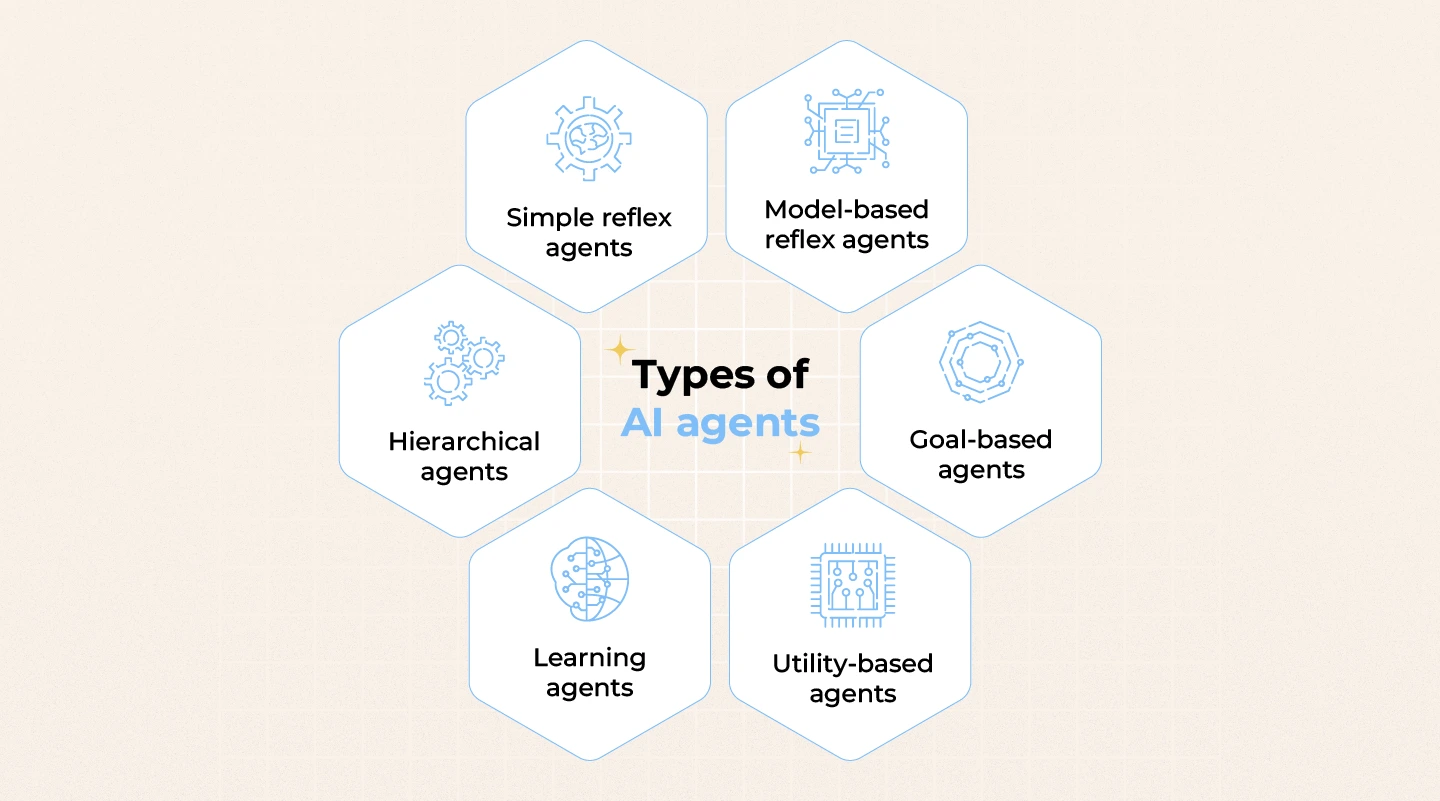

Simple reflex agents represent the most fundamental form of AI systems, operating based on direct input from their environment. These agents do not retain memory or learn from past interactions. Instead, they follow predefined rules that trigger specific actions in response to particular stimuli.

Due to their limited capability to process complex scenarios or adapt to unforeseen conditions, simple reflex agents are best suited for routine, low-complexity tasks.
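A simple reflex agent can be as small as a handful of condition-action rules. The thermostat below is a hypothetical illustration; the temperature thresholds and action names are assumptions chosen for the example.

```python
def reflex_thermostat(temperature_c: float) -> str:
    """Map the current reading directly to an action; no memory, no learning."""
    if temperature_c < 18.0:   # assumed lower comfort bound
        return "heat_on"
    if temperature_c > 24.0:   # assumed upper comfort bound
        return "cool_on"
    return "idle"


actions = [reflex_thermostat(t) for t in (15.0, 21.0, 27.5)]
```

The same reading always produces the same action, which is exactly why this kind of agent struggles with situations its rules did not anticipate.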

Real-life examples:

Model-based reflex agents enhance basic reflex systems by integrating an internal model of the environment. This model enables them to monitor changes over time, even when some data isn’t immediately visible, allowing for more accurate and context-aware decision-making.

By combining real-time inputs with stored insights, these agents are better equipped to operate in complex, fast-changing business environments. This makes them well-suited for use cases where historical context and ongoing state tracking are critical to delivering smarter, more adaptive responses.
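The difference from a simple reflex agent is the internal state. As a rough sketch, the hypothetical robot vacuum below remembers the last known status of each room and uses that memory to decide where to go next, even though it can only sense the room it is in. The class and action names are illustrative assumptions.

```python
from typing import Dict, List, Optional


class ModelBasedVacuum:
    def __init__(self, rooms: List[str]) -> None:
        # Internal model: last known state of each room (None means unknown).
        self.model: Dict[str, Optional[str]] = {room: None for room in rooms}

    def act(self, current_room: str, is_dirty: bool) -> str:
        if is_dirty:
            # Assume cleaning succeeds, so the model records the room as clean.
            self.model[current_room] = "clean"
            return "clean"
        self.model[current_room] = "clean"
        # Use the model to find a room that is not yet known to be clean.
        for room, state in self.model.items():
            if state != "clean":
                return f"move_to:{room}"
        return "stop"


vacuum = ModelBasedVacuum(["A", "B"])
trace = [vacuum.act("A", True), vacuum.act("A", False), vacuum.act("B", False)]
```

The second call shows the model at work: room A is clean, room B has never been observed, so the agent heads to B instead of stopping.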

Real-life examples:

Goal-based agents are AI systems designed to operate with specific business objectives in mind. Rather than simply reacting to inputs, these agents evaluate potential actions based on how effectively each contributes to achieving a defined goal.

Their ability to prioritize and make decisions with purpose makes them ideal for tasks that require flexibility, reasoning, and long-term planning.
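A goal-based agent typically searches for a sequence of actions that reaches the goal rather than reacting to the latest input. The sketch below uses breadth-first search over a made-up warehouse map; the location names and the `plan_route` helper are assumptions for illustration, and real systems may use very different planners.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical warehouse layout: each location lists where you can go next.
WAREHOUSE: Dict[str, List[str]] = {
    "dock": ["aisle1", "aisle2"],
    "aisle1": ["packing"],
    "aisle2": ["aisle3"],
    "aisle3": ["packing"],
    "packing": [],
}


def plan_route(graph: Dict[str, List[str]], start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search: return a shortest path to the goal, or None."""
    frontier = deque([[start]])
    visited = {start}
    while frontier:
        path = frontier.popleft()
        if path[-1] == goal:
            return path
        for nxt in graph.get(path[-1], []):
            if nxt not in visited:
                visited.add(nxt)
                frontier.append(path + [nxt])
    return None


route = plan_route(WAREHOUSE, "dock", "packing")
```

Here the agent picks the two-step route through `aisle1` because every candidate action is judged by whether it leads toward the goal.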

Real-life examples:

Utility-based agents take intelligent decision-making a step further by not only pursuing goals but also evaluating how “desirable” each outcome is. These agents are designed to assess various possible actions and select the one that maximizes overall utility, based on certain criteria like safety, speed, cost, or user preferences.

This approach allows utility-based agents to operate in more complex, dynamic environments where multiple outcomes are possible and trade-offs must be considered.
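The trade-off idea can be made concrete with a weighted utility score. In the hypothetical sketch below, each route has made-up desirability scores between 0 and 1 (higher is better) for speed, cost, and safety, and the agent picks whichever option maximizes the weighted sum. The routes, scores, and weights are all assumptions.

```python
from typing import Dict

# Made-up, normalized desirability scores per option (1.0 is best).
ROUTES: Dict[str, Dict[str, float]] = {
    "highway": {"speed": 0.9, "cost": 0.4, "safety": 0.7},
    "back_roads": {"speed": 0.5, "cost": 0.9, "safety": 0.8},
}


def utility(option: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted sum of the criteria the user cares about."""
    return sum(weights[name] * option[name] for name in weights)


def choose(options: Dict[str, Dict[str, float]], weights: Dict[str, float]) -> str:
    """Return the name of the option with the highest utility."""
    return max(options, key=lambda name: utility(options[name], weights))


in_a_hurry = choose(ROUTES, {"speed": 0.6, "cost": 0.1, "safety": 0.3})
on_a_budget = choose(ROUTES, {"speed": 0.1, "cost": 0.6, "safety": 0.3})
```

Both users share the same goal (arrive at the destination), yet the agent recommends different routes because their weights, and therefore the utilities, differ.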

Real-life examples:

Learning agents represent a more advanced class of AI systems capable of improving their performance over time through experience. Unlike reflex-based agents, learning agents can adapt to new situations by collecting data, analyzing outcomes, and updating their decision-making strategies accordingly.

These agents are composed of 4 key components:

This structure allows learning agents to evolve continuously, making them ideal for complex, ever-changing environments.
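To make the structure more tangible, here is a toy sketch that assumes the four components follow the common textbook breakdown: a performance element (picks actions), a critic (scores outcomes), a learning element (updates estimates), and a problem generator (suggests exploratory actions). The channel names, reward values, and update rule are hypothetical.

```python
from typing import Dict, List


class LearningAgent:
    def __init__(self, actions: List[str], explore_every: int = 3,
                 learning_rate: float = 0.5) -> None:
        self.actions = list(actions)
        self.values: Dict[str, float] = {a: 0.0 for a in self.actions}
        self.explore_every = explore_every
        self.learning_rate = learning_rate
        self.steps = 0

    def choose(self) -> str:
        self.steps += 1
        # Problem generator: every few steps, try an action in round-robin order.
        if self.steps % self.explore_every == 0:
            index = (self.steps // self.explore_every) % len(self.actions)
            return self.actions[index]
        # Performance element: otherwise exploit the best current estimate.
        return max(self.actions, key=lambda a: self.values[a])

    def learn(self, action: str, reward: float) -> None:
        # Learning element: move the estimate toward the critic's reward.
        self.values[action] += self.learning_rate * (reward - self.values[action])


def critic(action: str) -> float:
    """Hypothetical critic: fixed, made-up reward per contact channel."""
    return {"email": 0.2, "chat": 0.8, "phone": 0.5}[action]


agent = LearningAgent(["email", "chat", "phone"])
for _ in range(12):
    chosen = agent.choose()
    agent.learn(chosen, critic(chosen))
best = max(agent.values, key=agent.values.get)
```

The agent starts out favoring "email" only because it is listed first, but the periodic exploration lets it discover that "chat" earns the highest reward, and its estimates shift accordingly.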

Real-life examples:

Hierarchical agents are designed to handle complex tasks by breaking them down into smaller, more manageable sub-tasks. These agents operate across multiple levels of abstraction, where higher-level goals are decomposed into a series of lower-level actions or decisions. This structured approach allows them to maintain both strategic oversight and operational control simultaneously.
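The decomposition can be sketched as a playbook that maps each higher-level goal to lower-level steps, expanded recursively until only executable worker actions remain. The order-fulfilment scenario, the `PLAYBOOK` table, and the worker functions below are hypothetical and serve only to illustrate the layering.

```python
from typing import Callable, Dict, List


# Hypothetical low-level workers that perform concrete actions.
def pick_items(order: Dict) -> str:
    return f"picked {len(order['items'])} items"


def pack_box(order: Dict) -> str:
    return "packed box"


def print_label(order: Dict) -> str:
    return f"label for {order['address']}"


WORKERS: Dict[str, Callable[[Dict], str]] = {
    "pick_items": pick_items,
    "pack_box": pack_box,
    "print_label": print_label,
}

# Higher-level goals mapped to lower-level goals or worker actions.
PLAYBOOK: Dict[str, List[str]] = {
    "fulfil_order": ["prepare", "ship"],
    "prepare": ["pick_items", "pack_box"],
    "ship": ["print_label"],
}


def expand(task: str) -> List[str]:
    """Recursively break a goal down into worker-level actions."""
    if task in WORKERS:
        return [task]
    steps: List[str] = []
    for sub in PLAYBOOK[task]:
        steps.extend(expand(sub))
    return steps


def run_goal(goal: str, order: Dict) -> List[str]:
    return [WORKERS[step](order) for step in expand(goal)]


order = {"items": ["lens", "tripod"], "address": "221B Example St"}
results = run_goal("fulfil_order", order)
```

The top level only knows about "prepare" and "ship", while the workers only know their own step, which is how the agent keeps strategic oversight and operational control separate.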

Real-life examples:


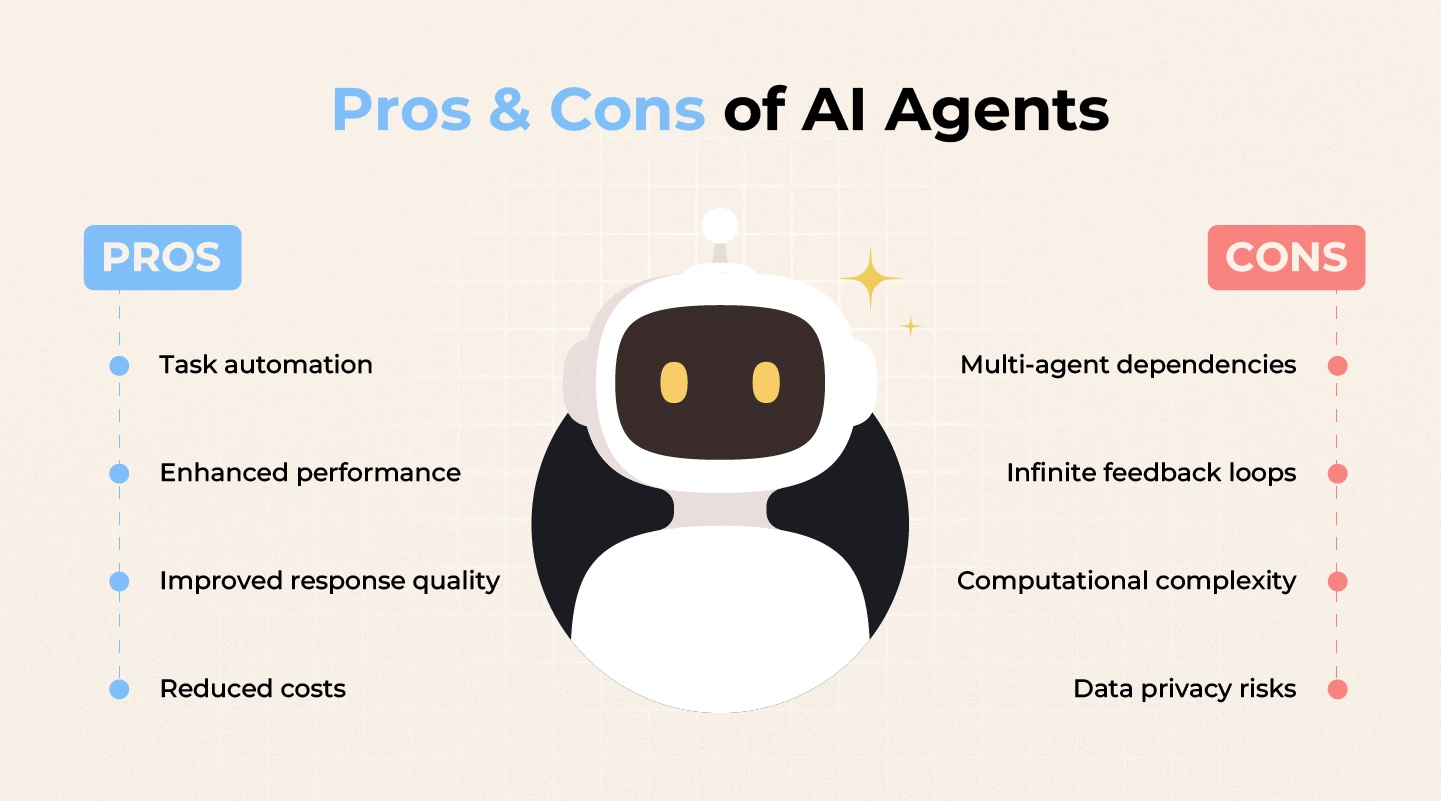

AI agents offer tremendous potential to streamline operations and enhance business efficiency. As such, adopting and integrating them into existing systems is becoming increasingly essential for organizations aiming to stay competitive.

However, the implementation process often comes with technical challenges — from data compatibility and scalability issues to technical debt and data privacy concerns.

To navigate these complexities effectively, partnering with an experienced AI-driven design consultant such as Lollypop Design Studio can significantly reduce risk and accelerate success.

Connect with Lollypop to schedule a FREE consultation for your AI integration journey!

AI agent software comprises several core components:

These interconnected components enable AI agent platforms to perceive their environment, process information, make decisions, and learn from their experiences.

While both AI agents and AI chatbots utilize artificial intelligence, they differ in functionality and autonomy. AI chatbots are designed primarily for conversational interactions, providing responses based on predefined scripts or training data. In contrast, AI agents can autonomously make decisions, perform complex tasks, and adapt to new situations without constant human guidance.

Vertical AI agents are specialized AI systems tailored to operate within a specific industry or domain. Unlike general-purpose AI models, these intelligent agents focus on particular tasks or workflows, leveraging domain-specific knowledge to optimize performance.